Example

“Show me this month’s sales status”

| State | Behavior | Result |
|---|---|---|
| Base model | Guesses from general AI knowledge | “I cannot access sales data” |
| Agent (DB + KB connected) | Queries the sales DB + applies report format | Accurate sales data + tabular response |

Agent Processing Pipeline

The agent receives a user question and generates a response through this pipeline. A guardrail validates the input, the Knowledge Base retrieves related documents, tools (API, DB) are invoked when needed, and the LLM produces the final response.

Agent vs. Base Model

| Aspect | Base Model | Agent |
|---|---|---|
| Knowledge | Pre-training data only | Internal documents, DB integration |
| Tools | Built-in only | External APIs, MCP servers |
| Response style | Generic | Task-specific guidance applied |
| Security | None | Guardrails validate I/O |
| Consistency | Varies by prompt | Maintained via system prompt |
| Quality monitoring | Manual | Auto-evaluation tracking |
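The four pipeline stages described above (guardrail, Knowledge Base retrieval, tool calls, LLM) can be sketched as a single function. This is a minimal illustration, not the product's implementation: `run_agent`, the `demo_*` stubs, and the keyword-based tool trigger are all assumptions made for this example, since the document does not say how the agent decides that a tool is needed.

```python
from typing import Callable, Dict, List


def run_agent(
    question: str,
    guardrail: Callable[[str], str],
    retrieve: Callable[[str], List[str]],
    tools: Dict[str, Callable[[str], str]],
    llm: Callable[[str], str],
) -> str:
    # 1. The guardrail validates the input before anything else runs.
    safe_question = guardrail(question)
    # 2. The Knowledge Base retrieves related documents.
    documents = retrieve(safe_question)
    # 3. Tools are invoked only when needed. A keyword match stands in for
    #    the real decision logic, which is an assumption of this sketch.
    tool_results = [
        call(safe_question)
        for keyword, call in tools.items()
        if keyword in safe_question.lower()
    ]
    # 4. The LLM produces the final response from the collected context.
    context = "\n".join(documents + tool_results)
    return llm(f"Context:\n{context}\n\nQuestion: {safe_question}")


# Stub components so the sketch runs without any external service.
def demo_guardrail(text: str) -> str:
    if not text.strip():
        raise ValueError("empty input rejected by guardrail")
    return text.strip()


def demo_retrieve(query: str) -> List[str]:
    return ["Report format: one table per month"]


demo_tools = {"sales": lambda q: "sales_db: 3 rows returned"}


def demo_llm(prompt: str) -> str:
    return prompt  # echo the prompt instead of calling a model


print(run_agent("Show me this month's sales status",
                demo_guardrail, demo_retrieve, demo_tools, demo_llm))
```

Because every stage is passed in as a callable, each one can be swapped or tested in isolation, which mirrors how the agent's components are connected separately in the settings.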

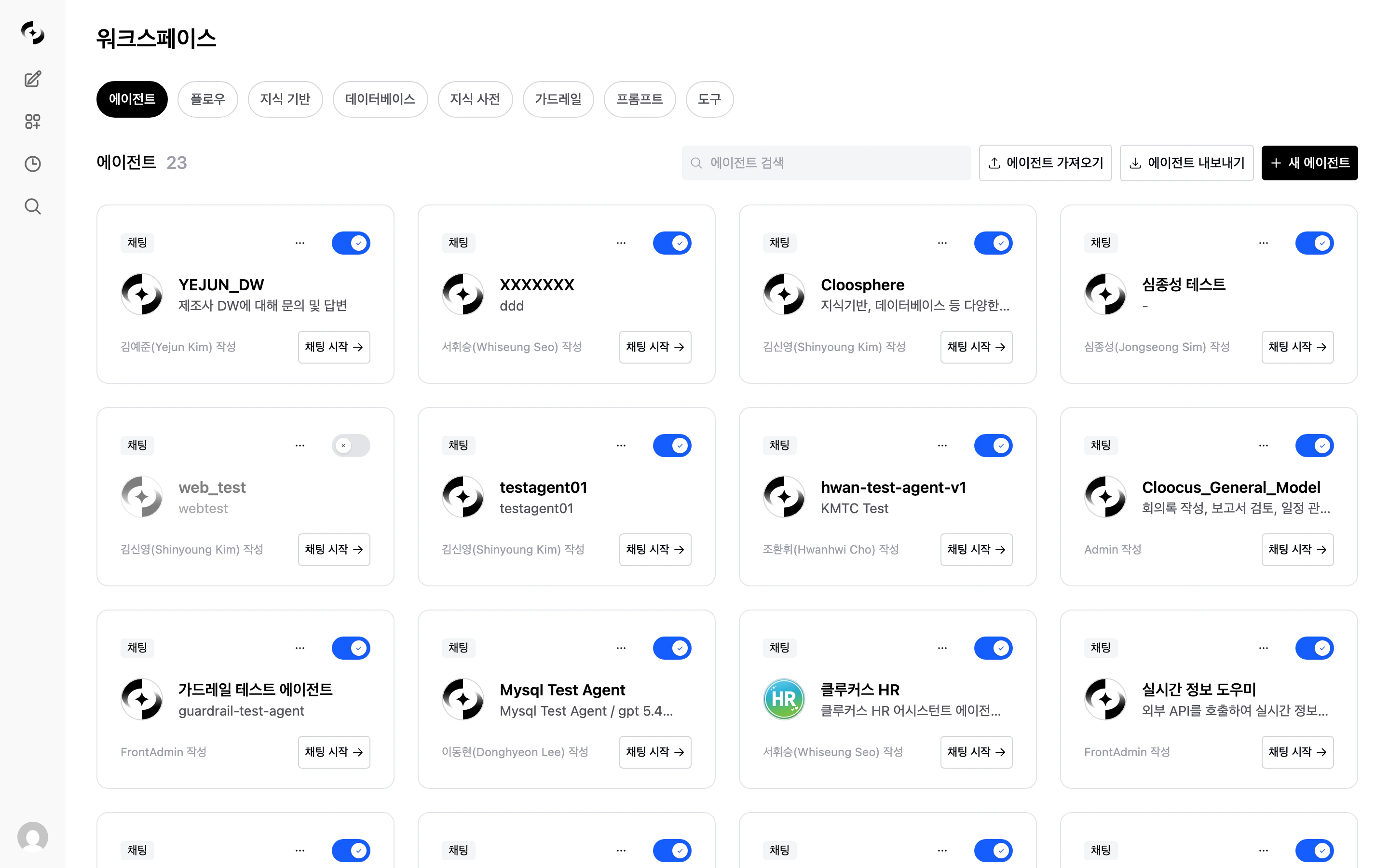

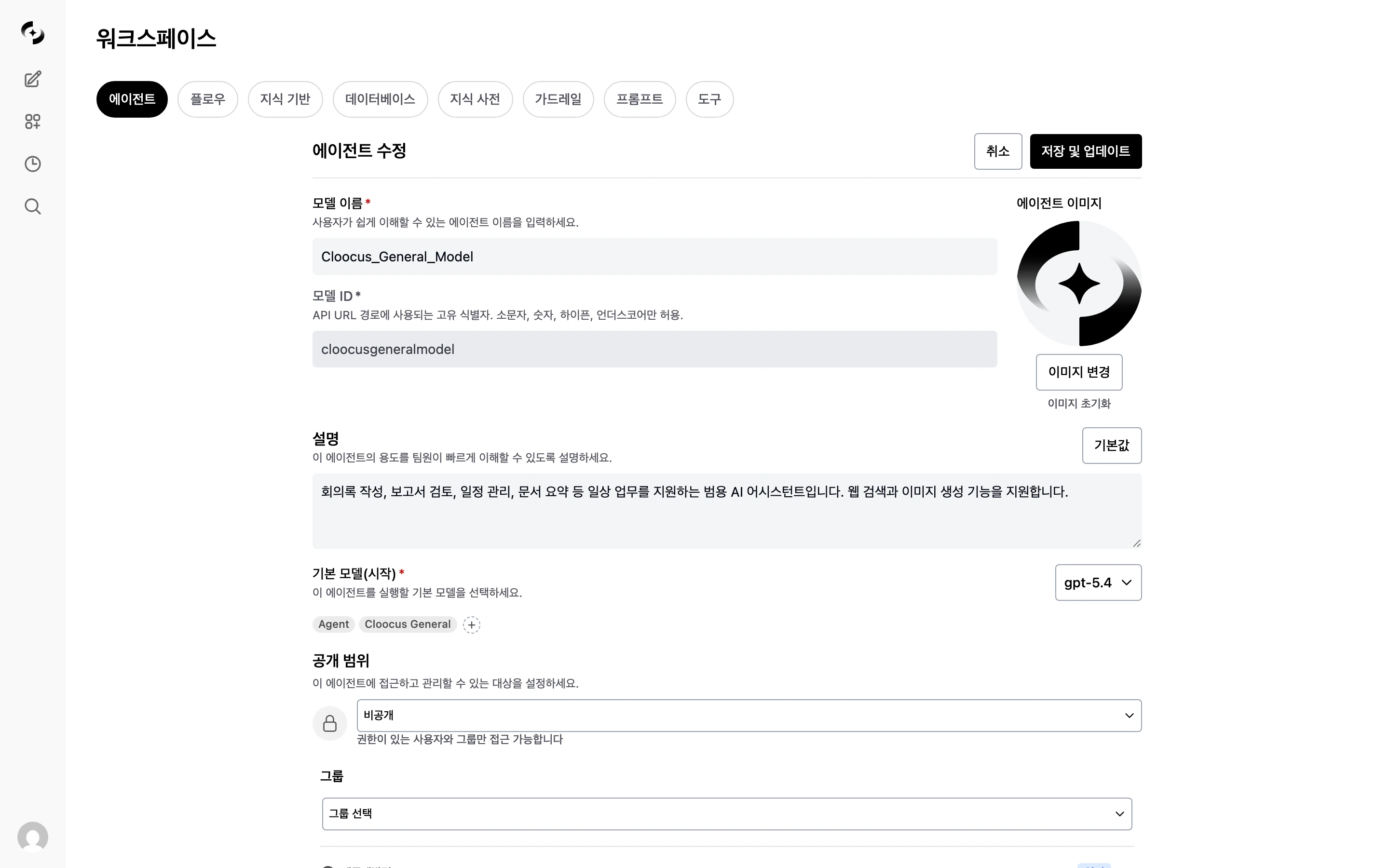

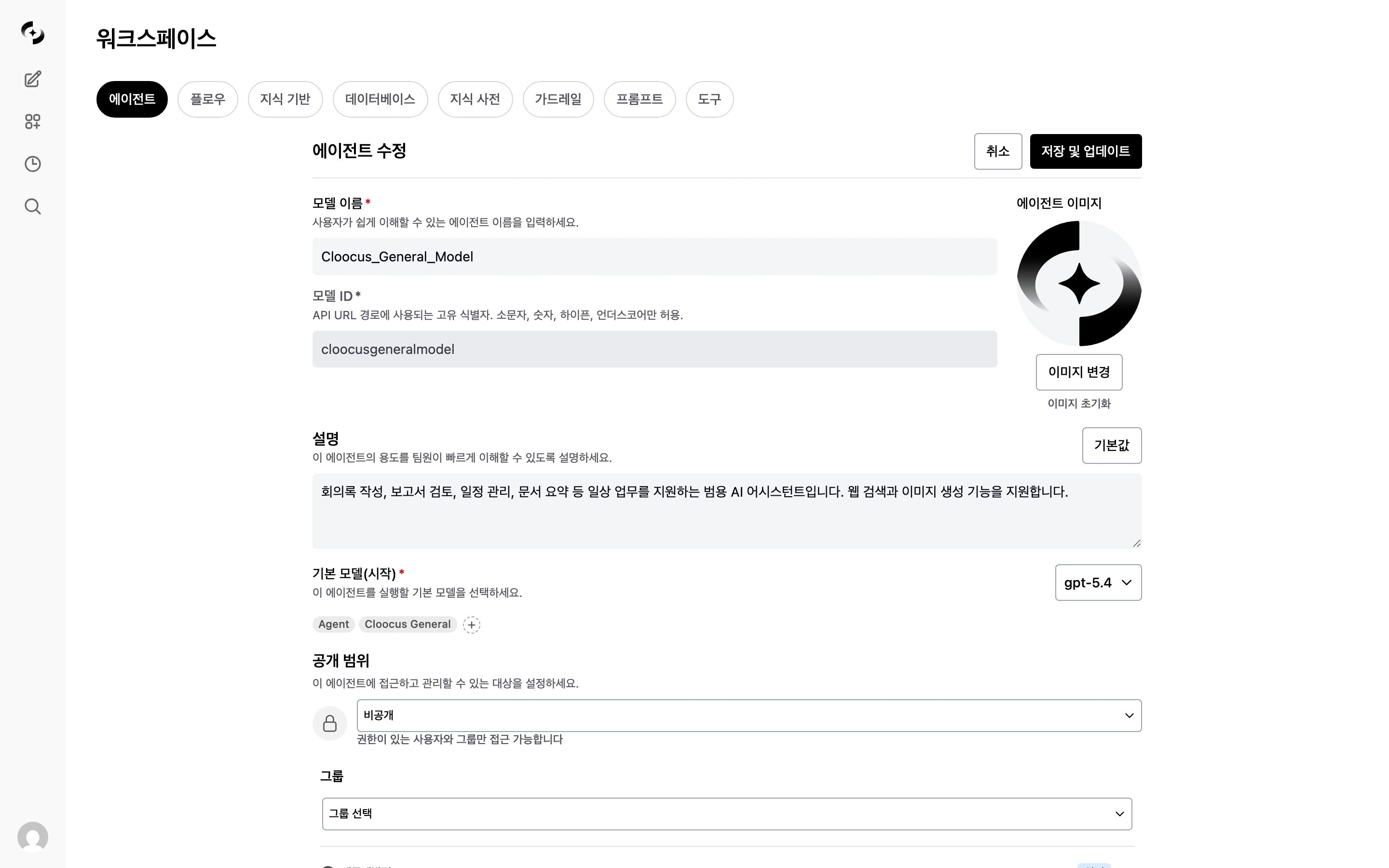

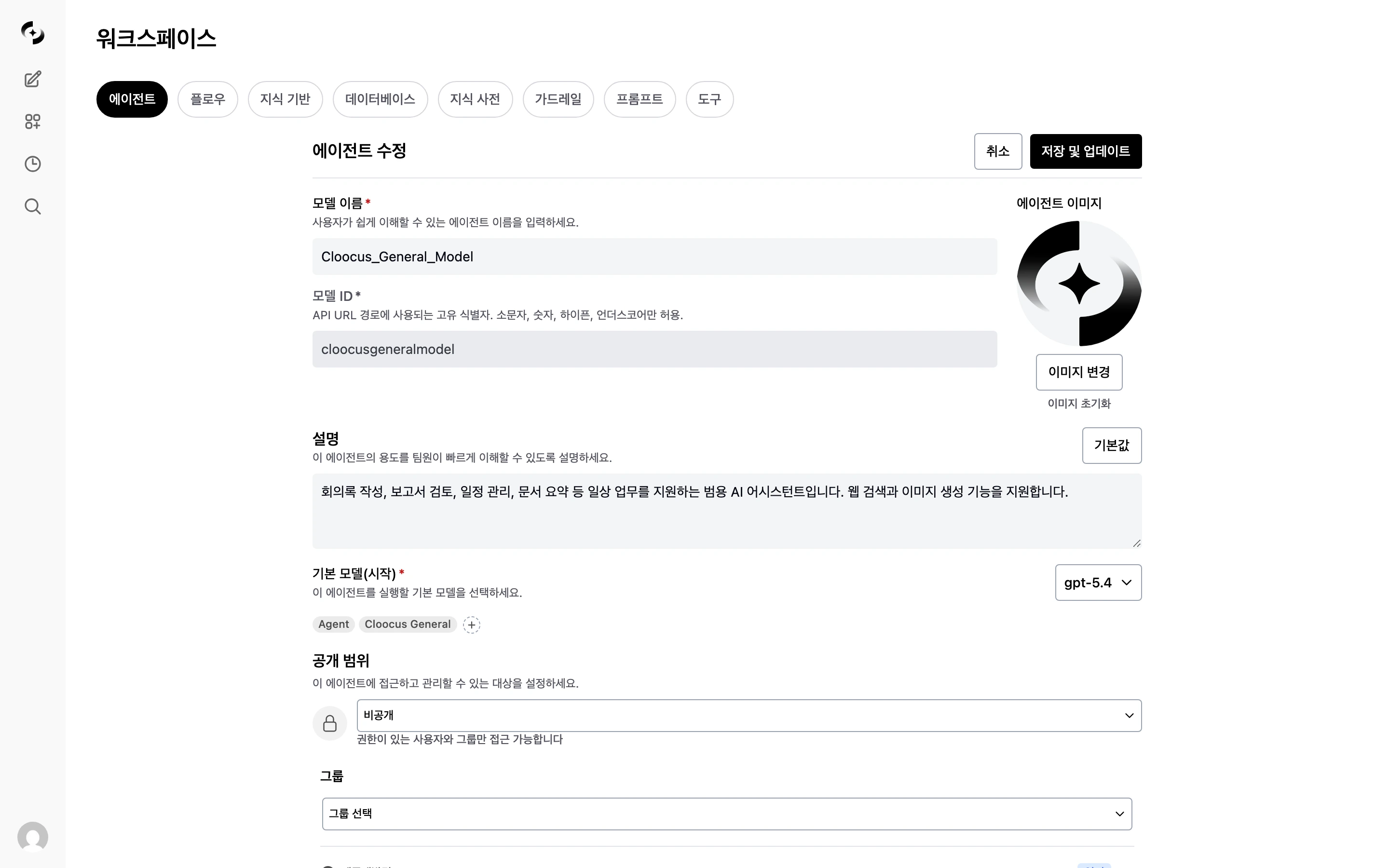

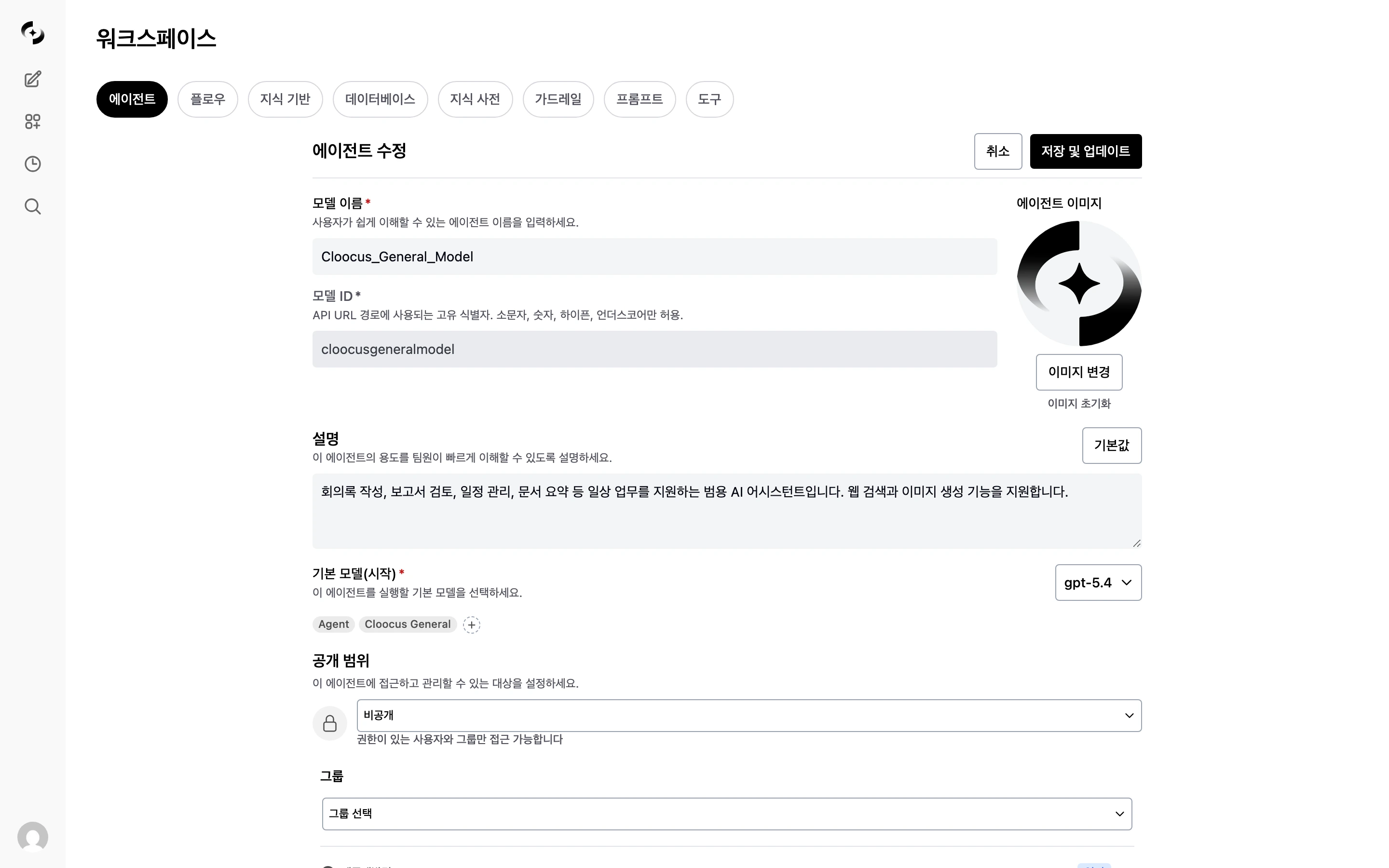

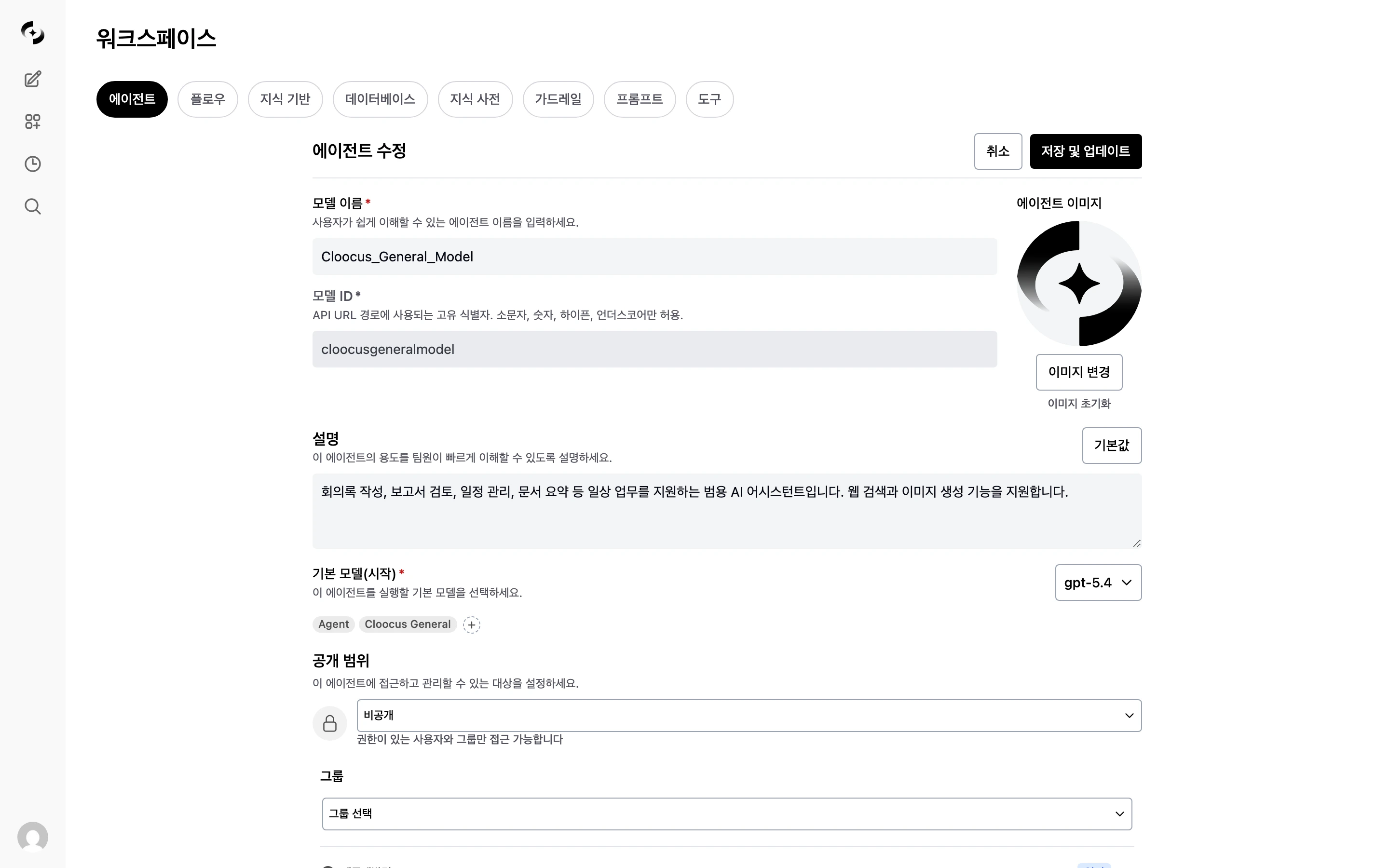

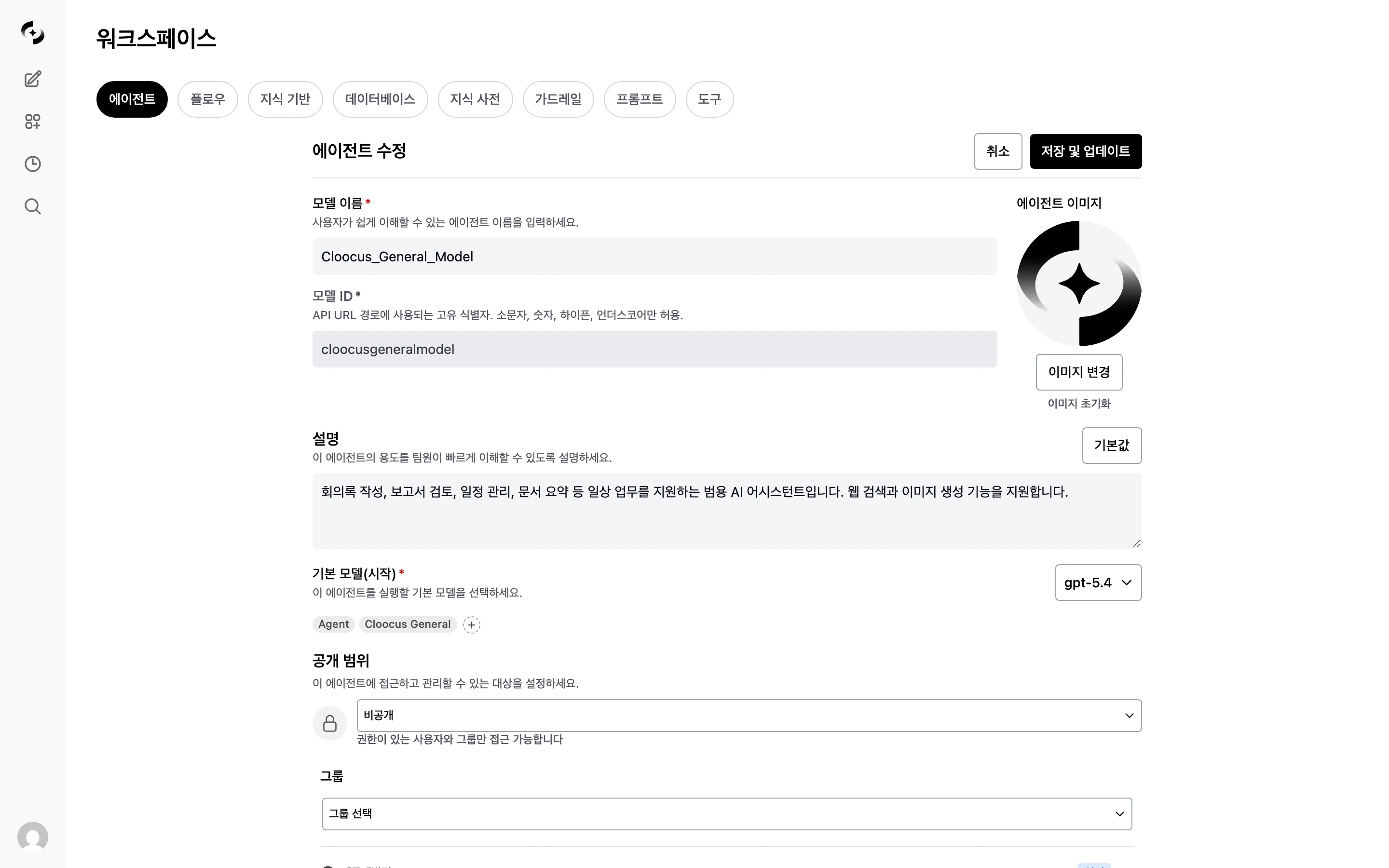

Creating an Agent

Enter basic info

| Field | Description | Example |
|---|---|---|
| Name | Agent display name | “Marketing Assistant” |
| Description | What the agent does | “Marketing content creation and analysis support” |
| Profile image | Agent icon | Marketing-related image |
| Tags | Classification tags | marketing, content |

Pick the base model

Write the prompts

| Field | Description |
|---|---|
| Task Prompt | Defines the agent’s role, persona, restrictions, and concrete task instructions. It serves as the agent’s general system prompt. |
| Response Format Prompt | Specifies the response format and structure (markdown, table, etc.). It is kept separate from the task prompt so the format can be managed independently. |

Example of a good task prompt

Why are the Task Prompt and Response Format Prompt separated?

| | Task Prompt | Response Format Prompt |
|---|---|---|
| When applied | While the agent is using tools | When composing the final answer |
| Role | “What to do” (role, restrictions) | “How to answer” (markdown, tables, length) |
| Include | Role definition, behavior rules, restrictions | Output format, tone, structure |
| Don’t include | Output format specs | Role definition, behavior rules |
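The separation in the table above can be illustrated with a small sketch that keeps the two prompts in different fields and picks one per stage. The class name, the stage names `tool_use` and `final_answer`, and the prompt texts are hypothetical. The sketch follows the "When applied" row literally; whether the platform also resends the task prompt when composing the final answer is not stated in this document.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPrompts:
    task_prompt: str             # "what to do": role, behavior rules, restrictions
    response_format_prompt: str  # "how to answer": output format, tone, structure


def prompt_for_stage(prompts: AgentPrompts, stage: str) -> str:
    """Return the prompt applied at a stage (stage names are illustrative)."""
    if stage == "tool_use":
        return prompts.task_prompt
    if stage == "final_answer":
        return prompts.response_format_prompt
    raise ValueError(f"unknown stage: {stage}")


marketing_prompts = AgentPrompts(
    task_prompt=(
        "You are a content specialist on the marketing team. "
        "Do not disparage competitors. Do not expose personal information."
    ),
    response_format_prompt=(
        "Answer in markdown. Use a table for comparisons. "
        "Keep answers under 300 words."
    ),
)

print(prompt_for_stage(marketing_prompts, "final_answer"))
```

Keeping the format rules in their own field means the output style can be changed without touching the role definition or its restrictions.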

Configure prompt suggestions (optional)

| Option | Description |
|---|---|
| Default | Use system default suggestions |
| Custom | Set agent-specific suggestions |

Connect Knowledge Bases

- Click “+ Add” in the “Knowledge Base” section

- Select Knowledge Bases to connect (multiple supported)

Connect databases (optional)

- Click “+ Add” in the “Database” section

- Select databases (multiple supported)

Connect glossaries (optional)

- Click “+ Add” in the “Glossary” section

- Select glossaries (multiple supported)

Connect tools (optional)

| Tool Type | Description |
|---|---|
| OpenAPI server | Interact with external services via REST API |
| MCP server | Tool integration via Model Context Protocol |

Capability settings (optional)

| State | Description |
|---|---|
| Disabled | The capability is completely hidden in chat (default) |
| Default On | Auto-enabled at chat start, user can turn off |
| Default Off | Visible in chat, but user must turn it on |

| Capability | Description |
|---|---|
| Web Search | Real-time web search for up-to-date info. Configurable result count and domain filter |
| Image Generation | AI image generation engine integration. Pick which connection to use |
| Code Interpreter | Run Python code for calculations and data analysis |
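The three capability states map to two questions: is the toggle visible in chat, and is it on when the chat starts? A minimal sketch of that mapping follows. The enum and function names are illustrative and not part of the product.

```python
from enum import Enum
from typing import Tuple


class CapabilityState(Enum):
    DISABLED = "disabled"        # hidden in chat (the default)
    DEFAULT_ON = "default_on"    # visible, enabled at chat start
    DEFAULT_OFF = "default_off"  # visible, user must enable it


def chat_toggle(state: CapabilityState) -> Tuple[bool, bool]:
    """Return (visible_in_chat, enabled_at_chat_start) for a capability."""
    if state is CapabilityState.DISABLED:
        return (False, False)
    if state is CapabilityState.DEFAULT_ON:
        return (True, True)
    return (True, False)


for state in CapabilityState:
    visible, enabled = chat_toggle(state)
    print(f"{state.name}: visible={visible}, enabled_at_start={enabled}")
```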

Response format (optional)

| Mode | Description |
|---|---|
| Chat | Default freeform text response |
| Structured | Structured response per JSON Schema (Structured Output) |
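To make Structured mode concrete, here is a hypothetical JSON Schema for the sales-status example, with a deliberately tiny conformance check. The schema fields (`month`, `total_sales`, `top_products`) are invented for illustration, and `conforms` covers only `required` and top-level primitive `type` keywords. It is not a full JSON Schema validator; a real deployment would rely on a dedicated validation library or on the platform's own enforcement.

```python
import json

# Hypothetical schema for a sales-status answer.
SALES_SCHEMA = {
    "type": "object",
    "properties": {
        "month": {"type": "string"},
        "total_sales": {"type": "number"},
        "top_products": {"type": "array"},
    },
    "required": ["month", "total_sales"],
}

_PY_TYPES = {"string": str, "array": list, "object": dict, "boolean": bool}


def _matches(value, type_name: str) -> bool:
    if type_name == "number":
        # bool is a subclass of int in Python, so exclude it explicitly.
        return isinstance(value, (int, float)) and not isinstance(value, bool)
    return isinstance(value, _PY_TYPES[type_name])


def conforms(raw_response: str, schema: dict) -> bool:
    """Check required keys and top-level property types only."""
    try:
        data = json.loads(raw_response)
    except json.JSONDecodeError:
        return False
    if not isinstance(data, dict):
        return False
    if any(key not in data for key in schema.get("required", [])):
        return False
    return all(
        _matches(data[key], rule["type"])
        for key, rule in schema.get("properties", {}).items()
        if key in data
    )


print(conforms('{"month": "2024-05", "total_sales": 1200.5}', SALES_SCHEMA))
```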

Guardrail settings (optional)

- Auto-detect and mask PII

- Custom pattern filtering

- Block prohibited words

- LLM-based content validation
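The first three guardrail options above can be approximated with regular expressions and a word list. The sketch below is an assumption-laden illustration: the patterns, the `EMP-` employee ID format, the prohibited word, and the mask labels are all invented, and real PII detection needs far broader coverage. LLM-based content validation is left out because it depends on a model call.

```python
import re
from typing import Tuple

# Illustrative patterns only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\b\d{2,3}-\d{3,4}-\d{4}\b")
CUSTOM_PATTERNS = [re.compile(r"\bEMP-\d{6}\b")]  # hypothetical employee ID format
PROHIBITED_WORDS = {"project-x"}                  # hypothetical blocked term


def apply_guardrail(text: str) -> Tuple[str, bool]:
    """Return (masked_text, blocked). Blocked messages come back empty."""
    lowered = text.lower()
    # Block prohibited words outright.
    if any(word in lowered for word in PROHIBITED_WORDS):
        return "", True
    # Auto-detect and mask PII.
    masked = EMAIL.sub("[EMAIL]", text)
    masked = PHONE.sub("[PHONE]", masked)
    # Custom pattern filtering.
    for pattern in CUSTOM_PATTERNS:
        masked = pattern.sub("[REDACTED]", masked)
    return masked, False


print(apply_guardrail("Mail jane.doe@example.com or call 010-1234-5678 about EMP-123456"))
```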

Auto-evaluation settings (optional)

| Setting | Description |
|---|---|
| Sampling rate | Share of responses to evaluate (1%–100%) |
| Evaluation type | Choose from retrieval quality, faithfulness, response quality |
| Judge model | LLM to use for evaluation |

Evaluation type details

| Type | Description |
|---|---|
| Retrieval Quality | Relevance of documents retrieved from the Knowledge Base |
| Faithfulness | Whether the response is faithful to retrieved content (no hallucination) |
| Response Quality | Overall quality, usefulness, and accuracy of the response |

| Situation | Recommended sampling rate | Reason |
|---|---|---|
| New agent (validation phase) | 50–100% | Need initial quality picture |
| Stabilized agent | 5–10% | Save costs while monitoring |
| Critical-business agent | 20–30% | Continuous quality assurance needed |
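The sampling rate trades evaluation cost against coverage. A minimal sketch of that trade-off, assuming each response is sampled independently at random (the document does not describe the actual sampling mechanism, and both function names are illustrative):

```python
import random


def should_evaluate(sampling_rate_percent: float, rng: random.Random) -> bool:
    """Decide whether one response is sent to the judge model."""
    if not 1 <= sampling_rate_percent <= 100:
        raise ValueError("sampling rate must be between 1 and 100")
    # rng.random() is in [0, 1), so a rate of 100 always evaluates.
    return rng.random() * 100 < sampling_rate_percent


def expected_evaluations(responses_per_day: int, sampling_rate_percent: float) -> float:
    """Average number of judge-model calls per day at a given rate."""
    return responses_per_day * sampling_rate_percent / 100


rng = random.Random(0)  # fixed seed keeps the demo repeatable
sampled = sum(should_evaluate(10, rng) for _ in range(10_000))
print(f"sampled {sampled} of 10000 responses at 10%")
print(expected_evaluations(2000, 5))
```

For example, a stabilized agent handling 2,000 responses a day at 5% averages 100 evaluations a day, while the same agent in its validation phase at 100% would evaluate all 2,000.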

Access permissions

| Option | Description |
|---|---|
| Public | Available to all users |
| Private | Available only to you |
| Group/Organization | Available to specified groups or organizations |
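The three access options amount to a simple check at the moment a user tries to use an agent. The sketch below is one way to express it; the `AgentAccess` fields and the visibility strings are hypothetical, and letting the owner keep access in group mode is an assumption the document does not confirm.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class AgentAccess:
    owner: str
    visibility: str  # "public", "private", or "group" (illustrative values)
    allowed_groups: Set[str] = field(default_factory=set)


def can_use(agent: AgentAccess, user: str, user_groups: Set[str]) -> bool:
    if agent.visibility == "public":
        return True                      # available to all users
    if agent.visibility == "private":
        return user == agent.owner       # available only to the creator
    if agent.visibility == "group":
        # Assumption: the owner always keeps access to their own agent.
        return user == agent.owner or bool(agent.allowed_groups & user_groups)
    raise ValueError(f"unknown visibility: {agent.visibility}")


hr_agent = AgentAccess(owner="alice", visibility="group", allowed_groups={"hr"})
print(can_use(hr_agent, "bob", {"hr", "sales"}))
print(can_use(hr_agent, "carol", {"sales"}))
```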

Using Agents

Select in Chat

In the model selector dropdown at the top of the chat, pick an agent. Agents appear in the list alongside regular models.

Invoke with @

Call a specific agent in chat with @agent-name.
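As an illustration of the @ invocation, the sketch below pulls the first mention out of a chat message. The naming rule it assumes (letters, digits, hyphens, and underscores, with no spaces) is a guess; the document does not say how display names such as “Marketing Assistant” map to an @ handle.

```python
import re
from typing import Optional, Tuple

# A mention must start the message or follow whitespace, so email
# addresses such as a@b.com are not treated as agent calls.
MENTION = re.compile(r"(?<!\S)@([A-Za-z0-9][A-Za-z0-9_-]*)")


def split_mention(message: str) -> Tuple[Optional[str], str]:
    """Return (agent_name or None, message without the first mention)."""
    match = MENTION.search(message)
    if match is None:
        return None, message
    remainder = message[:match.start()] + message[match.end():]
    remainder = re.sub(r"\s{2,}", " ", remainder).strip()
    return match.group(1), remainder


print(split_mention("@hr-assistant how many vacation days do I have?"))
```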

Agent Management

| Action | Description |
|---|---|
| Activate / Deactivate | Toggle on the agent card to enable/disable. Inactive agents can’t be selected in chat |
| Edit | Modify settings via the edit button or “more” menu on the agent card |
| Clone | Quickly create a new agent by copying an existing one |
| Export / Import | Back up and migrate agent settings between environments via JSON |
| Delete | Permanently delete the agent (no recovery) |
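Export and import through JSON can be pictured as a round trip between environments. The sketch below assumes a made-up settings layout (`name`, `base_model`, `knowledge_bases`, `tags`); the real export file's schema is not documented here, so treat every key as hypothetical.

```python
import json


def export_agent(config: dict) -> str:
    """Serialize agent settings to a JSON string for backup or migration."""
    return json.dumps(config, indent=2, sort_keys=True, ensure_ascii=False)


def import_agent(payload: str, name_suffix: str = "") -> dict:
    """Load agent settings from JSON, optionally renaming the copy."""
    config = json.loads(payload)
    if "name" not in config:
        raise ValueError("exported agent is missing a name")
    config["name"] += name_suffix
    return config


# Hypothetical settings for the HR use case.
hr_agent_config = {
    "name": "HR Assistant",
    "base_model": "GPT-4o-mini",
    "knowledge_bases": ["HR policy", "benefits guide"],
    "tags": ["hr"],
}

backup = export_agent(hr_agent_config)
restored = import_agent(backup, name_suffix=" (staging)")
print(restored["name"])
```

Since deletion is permanent, exporting an agent before deleting or heavily editing it gives a simple way to restore its settings later.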

Use Cases

- HR Assistant
  - Base model: GPT-4o-mini
  - Knowledge Base: HR policy, benefits guide
  - Task Prompt: HR specialist role
- Code Reviewer
- Data Analyst

Best Practices

Prompt Writing

- Define the role clearly — “You are a content specialist on Cloocus’s marketing team”

- Provide concrete instructions — response language, length, citation rules, etc.

- Set restrictions — no competitor disparagement, no PII exposure, etc.

Knowledge Base Connection

- Connect only relevant documents: Too many documents actually degrade retrieval accuracy

- Keep documents up-to-date: Refresh stale information regularly

- Write tool descriptions: Detailed tool descriptions for Knowledge Bases improve agent KB selection accuracy

Access Permissions

- Principle of least privilege: Grant access only to those who need it

- Manage by group/organization: More efficient than per-user assignment

- Review periodically: Check permission settings on a regular cadence

FAQ

What's the difference between an agent and a base model?

Can I connect multiple Knowledge Bases to one agent?

Web Search / Image Generation / Code Interpreter doesn't work

- Disabled: The capability is completely hidden in chat

- Default Off: User must turn it on in the chat input

- Default On: Auto-enabled. If still not working, check admin settings (web search/image generation connections)

Is agent usage tracked?

Can I move an agent to another environment?

Can I hide an agent?
