Example

“What is our company’s annual leave policy?”

| State | Behavior | Result |

|---|---|---|

| No Knowledge Base | Answers from general AI knowledge | Inaccurate or “I don’t know” |

| Knowledge Base connected | Searches hr-policy.pdf for related content, then answers | Accurate policy + citation |

Each Knowledge Base can use a different Document Processing Profile with its own extraction method and chunking strategy. See Document Processing Profile Selection below for details.

RAG Pipeline

Uploaded documents go through this pipeline before becoming searchable.

| Stage | Description |

|---|---|

| Apply Profile | Applies the extraction/chunking strategy from the KB’s document processing profile |

| Extract Text | Extracts text from documents (default, OCR, LLM Vision, etc., depending on profile) |

| Chunking | Splits long documents into search-friendly sizes (fixed-size or semantic) |

| Preserve Tables | Keeps tables intact in adjacent chunks instead of splitting (per profile) |

| Preserve Context | Adds an LLM-generated summary of the document context to each chunk (per profile) |

| Embedding | Converts text to high-dimensional vectors |

| Similarity Search | Finds chunks most similar to the question |

| LLM Response Generation | Generates an answer using the retrieved documents as context |
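The embedding and similarity search stages can be illustrated with a toy sketch. It assumes a bag-of-words count vector in place of a real embedding model and cosine similarity as the ranking measure. The function names `embed`, `cosine`, and `search` are hypothetical and are not part of the product.

```python
import math
from collections import Counter

def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def search(question: str, chunks: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k chunks most similar to the question."""
    q = embed(question)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:top_k]

chunks = [
    "Annual leave is 15 days per year.",   # made-up sample text
    "Expense reports are due monthly.",
]
print(search("annual leave days", chunks))
```

A real pipeline would pass the retrieved chunks to the LLM as context for the final answer.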

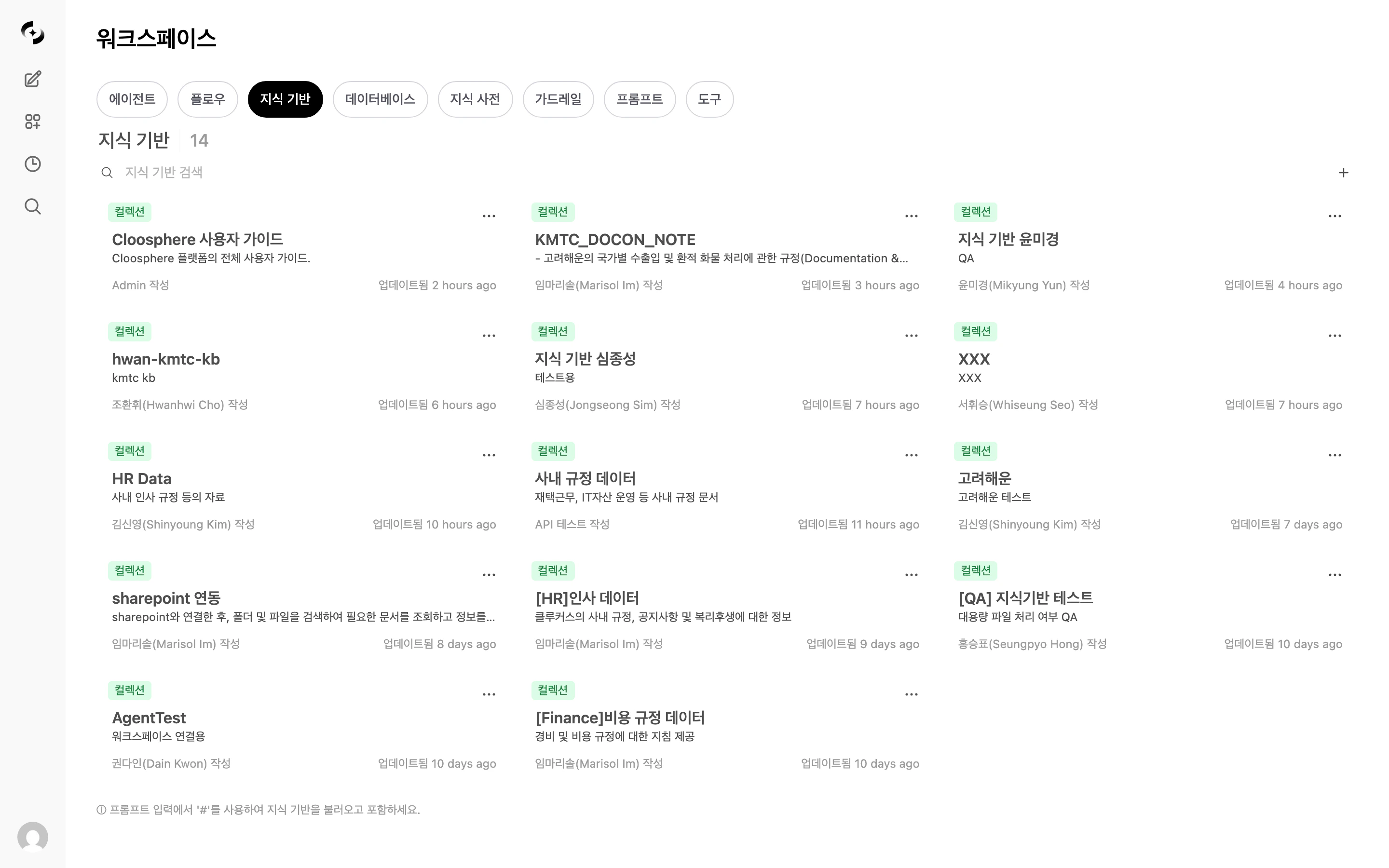

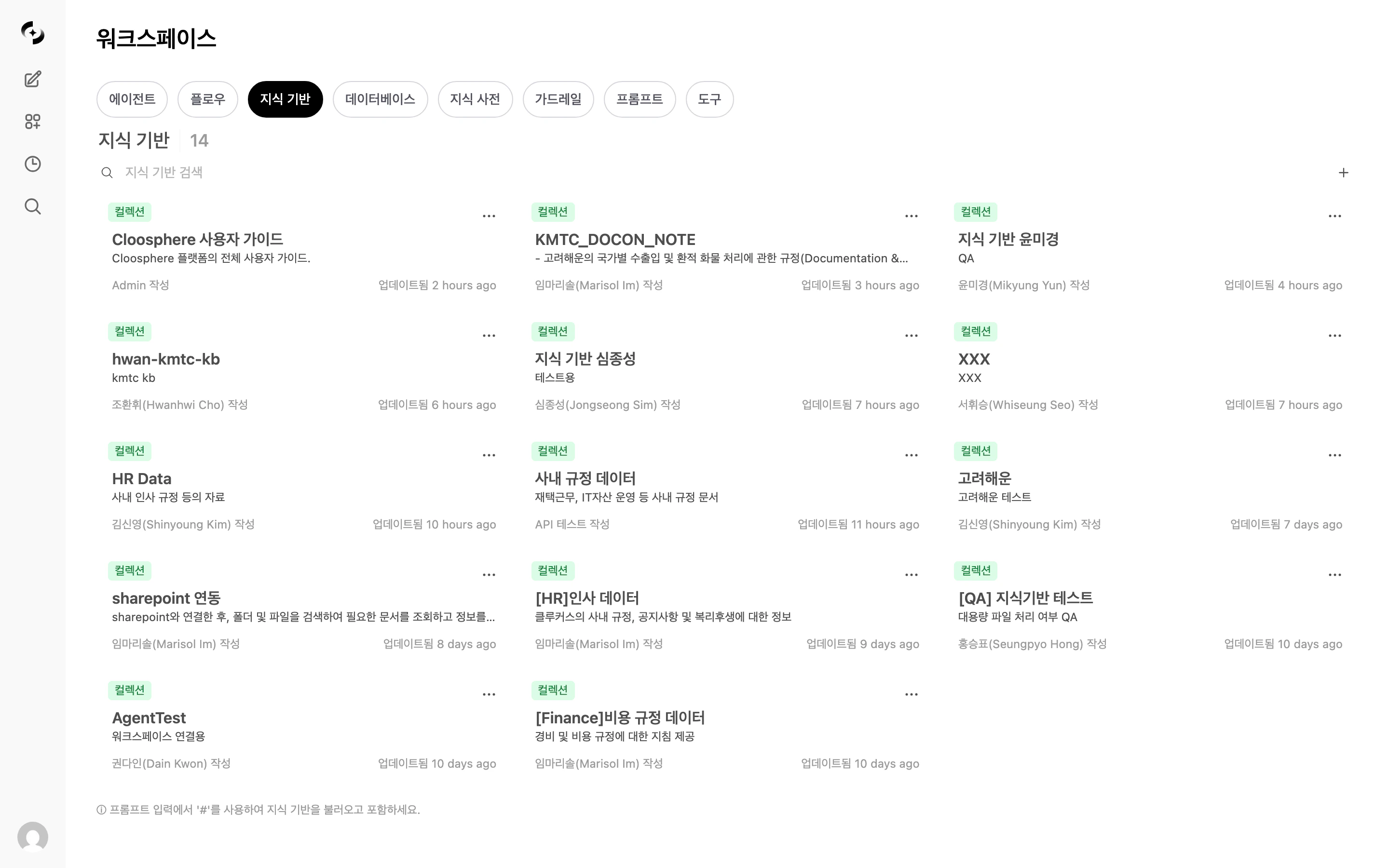

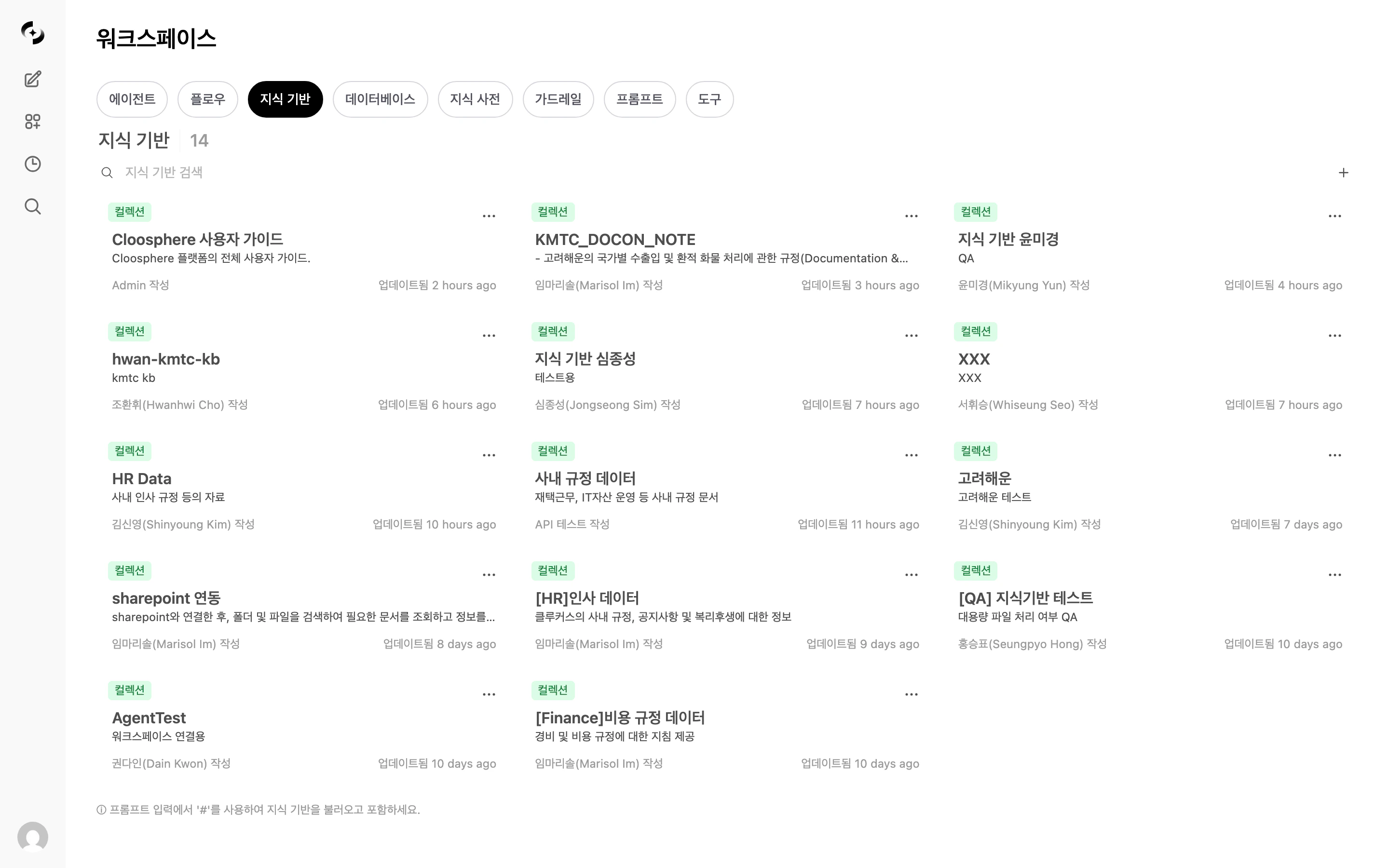

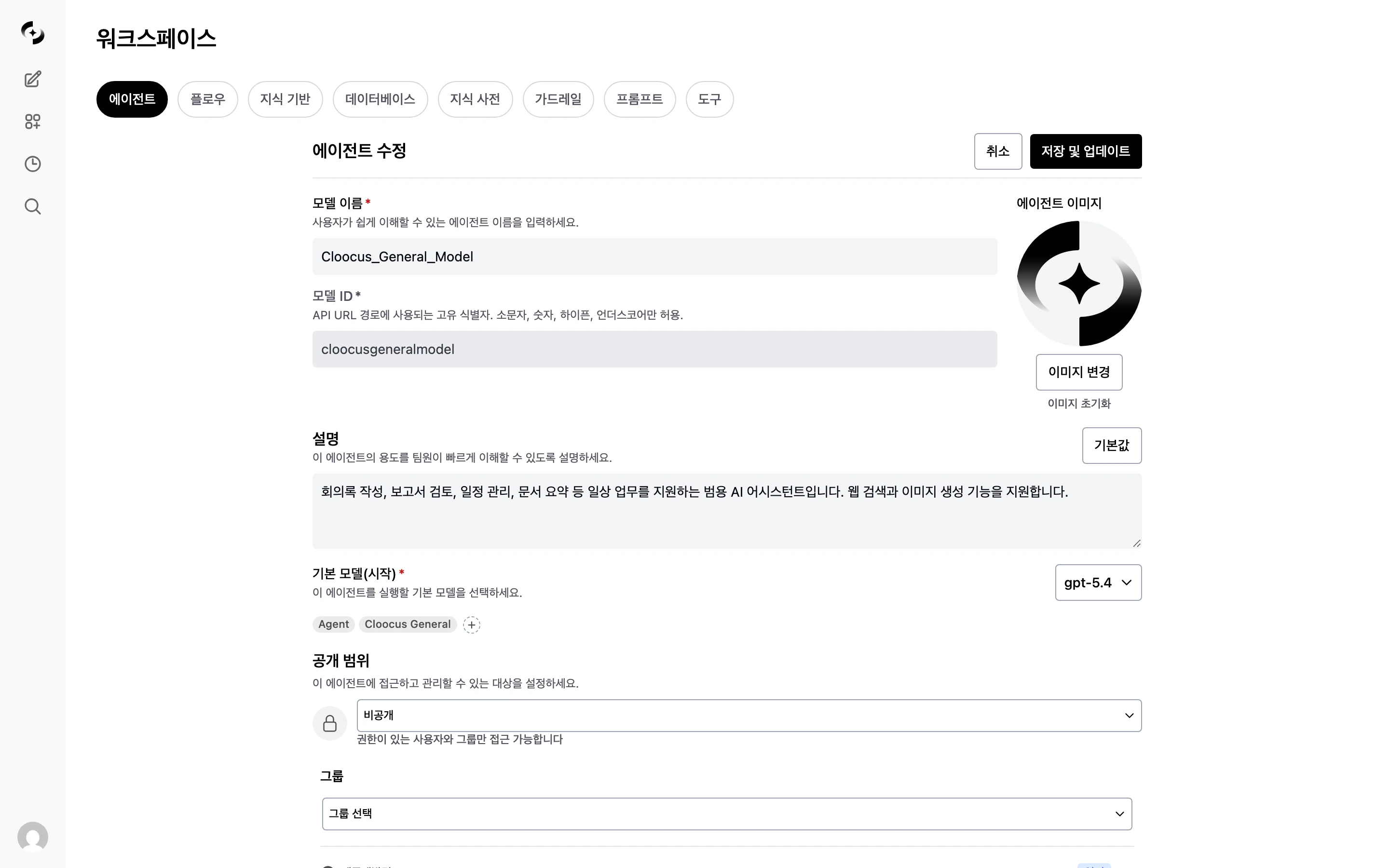

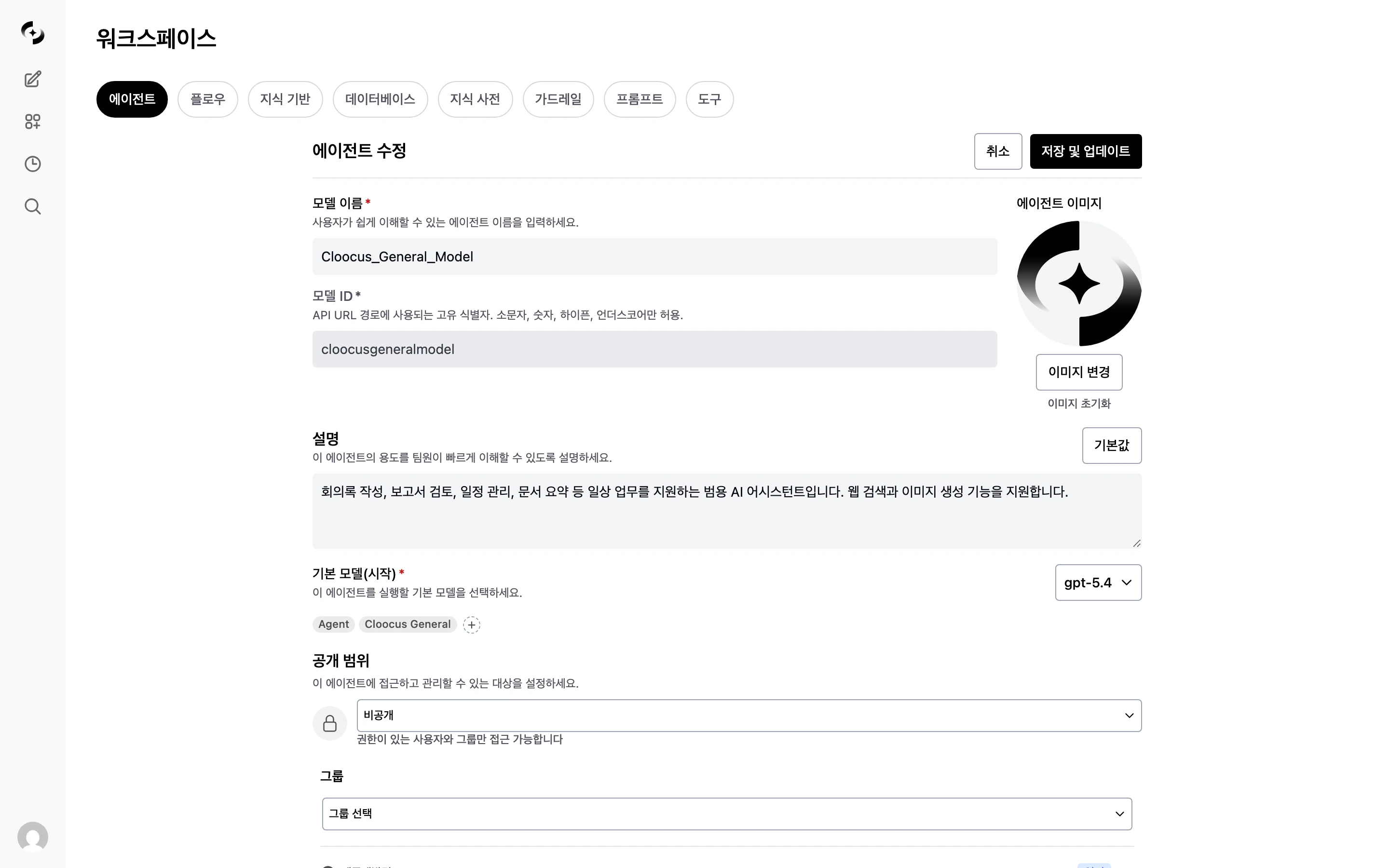

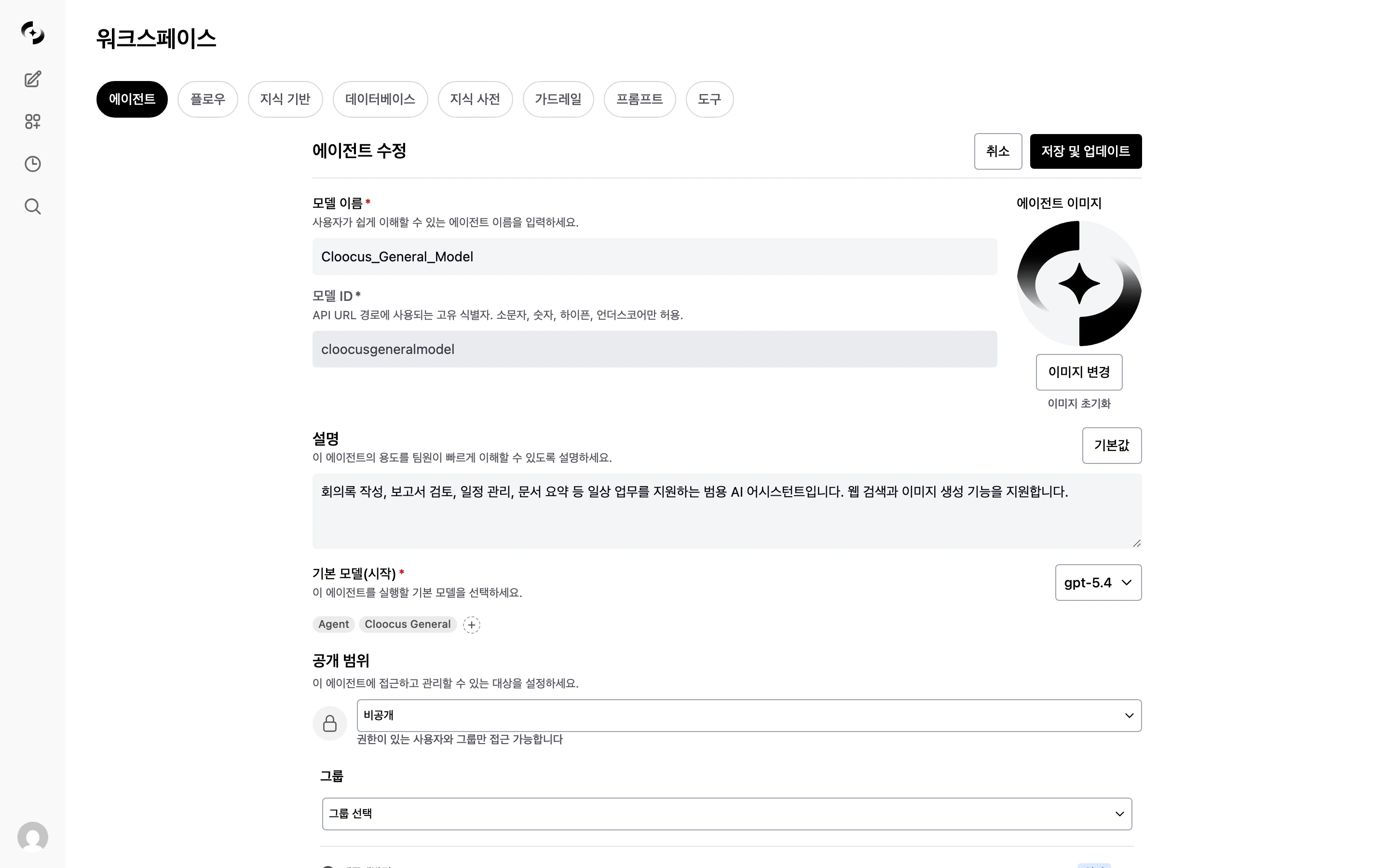

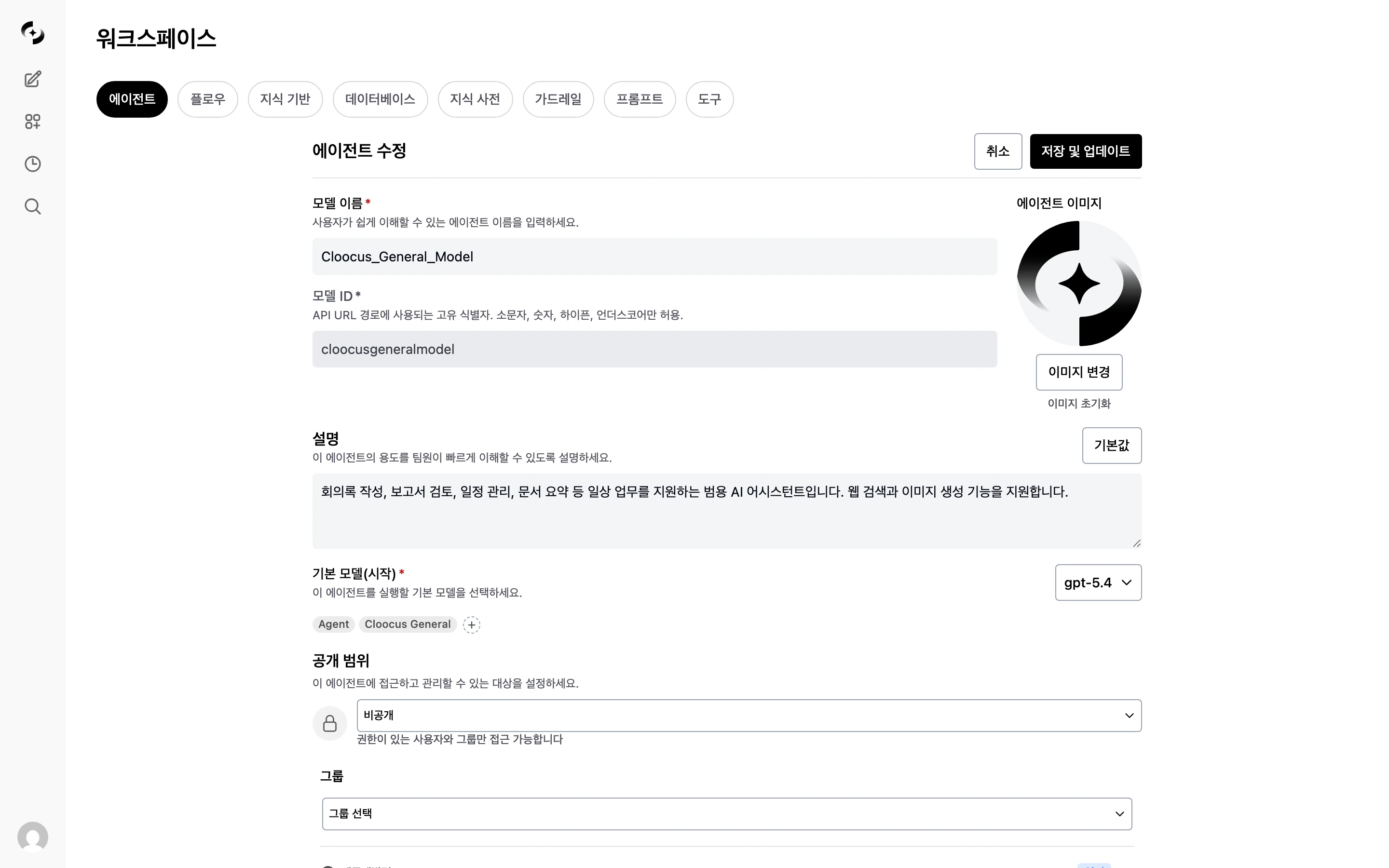

Creating a Knowledge Base

Create a new Knowledge Base

In Workspace > Knowledge Base, click the + button at the top-right.

| Field | Description | Example |

|---|---|---|

| Name | KB name (required) | “HR Policy 2024” |

| Description | Purpose and content (required) | “HR team policies and guidelines” |

| Access | Public/Private and groups/organizational units | Public, or restricted to specific groups/organizations |

| Document Processing Profile | Extraction/chunking strategy applied to this KB | “Default Extraction”, “LLM Vision High Precision”, etc. |

Upload documents

Add documents to the new Knowledge Base. Click the Add Content (+) button to choose an upload method.

| Method | Description |

|---|---|

| Drag and Drop | Drag files onto the upload area |

| Upload Files | Select “Upload Files” from the “Add Content” menu |

| Upload Directory | Select “Upload Directory” — bulk-upload all files in a folder |

| Add Text | Write text directly to add as content |

| Cloud Storage | Google Drive, OneDrive, SharePoint (visible only after an admin has configured them) |

Wait for processing

Uploaded documents go through text extraction → chunking → embedding → indexing automatically.

A real-time notification appears when processing completes.

Files that take more than 10 minutes to process are auto-failed. Delete and re-upload the file in that case.

Bulk uploads:

- 5+ files or directory uploads switch to batch mode

- A progress bar shows steps (upload → processing) at the top, with failures shown in red

- 3 files are processed in parallel

- Progress state persists across page refreshes
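The bulk-upload rules above can be sketched as follows. This is only an illustration under stated assumptions: `process_file` is a hypothetical placeholder for the real processing steps, and a thread pool is one plausible way to model "3 files in parallel", not necessarily how the product implements it.

```python
from concurrent.futures import ThreadPoolExecutor

BATCH_THRESHOLD = 5   # "5+ files" switches to batch mode
PARALLEL_WORKERS = 3  # "3 files are processed in parallel"

def uses_batch_mode(file_count: int, is_directory: bool) -> bool:
    """Directory uploads and uploads of 5 or more files use batch mode."""
    return is_directory or file_count >= BATCH_THRESHOLD

def process_file(name: str) -> str:
    """Hypothetical placeholder for extraction, chunking, embedding, indexing."""
    return f"{name}: done"

def run_batch(files: list[str]) -> list[str]:
    """Process files with at most PARALLEL_WORKERS running at the same time."""
    with ThreadPoolExecutor(max_workers=PARALLEL_WORKERS) as pool:
        return list(pool.map(process_file, files))  # results keep input order
```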

Supported File Formats

| Category | Formats | Max Size |

|---|---|---|

| Documents | PDF, DOCX, PPTX, TXT, MD | 50MB |

| Spreadsheets | XLSX, CSV | 20MB |

| Web | HTML | - |

| Code | PY, JS, TS, JSON, YAML | 10MB |
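A pre-upload check against the table above might look like the sketch below. The function name `check_upload` and the return strings are hypothetical, and treating 1 MB as 1024 × 1024 bytes is an assumption, since the table does not say how sizes are measured.

```python
from pathlib import Path

MB = 1024 * 1024  # assumption: binary megabytes

# Limits copied from the table above; None means no limit is listed.
MAX_SIZE_BY_EXTENSION = {
    **dict.fromkeys(["pdf", "docx", "pptx", "txt", "md"], 50 * MB),
    **dict.fromkeys(["xlsx", "csv"], 20 * MB),
    "html": None,
    **dict.fromkeys(["py", "js", "ts", "json", "yaml"], 10 * MB),
}

def check_upload(filename: str, size_bytes: int) -> str:
    """Return "ok", "too large", or "unsupported format" for a candidate file."""
    ext = Path(filename).suffix.lower().lstrip(".")
    if ext not in MAX_SIZE_BY_EXTENSION:
        return "unsupported format"
    limit = MAX_SIZE_BY_EXTENSION[ext]
    if limit is not None and size_bytes > limit:
        return "too large"
    return "ok"
```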

Dynamic Filters

Dynamic filters let you classify documents in a KB by metadata and automatically narrow the search scope.

For the internal mechanics of dynamic filters, including the Manual vs. AI comparison and the 5-step search flow, see the Dynamic Filters Deep-Dive.
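As a rough illustration of narrowing the search scope by metadata, the sketch below keeps only chunks whose metadata matches every filter condition before any similarity search runs. The data layout and the names `matches` and `narrow` are hypothetical. The product's actual flow is described in the Deep-Dive page and may differ.

```python
def matches(metadata: dict, conditions: dict) -> bool:
    """True if the metadata satisfies every filter condition."""
    for field, wanted in conditions.items():
        value = metadata.get(field)
        if isinstance(value, list):   # Collection-style field: any selected value may match
            if wanted not in value:
                return False
        elif value != wanted:         # Enum/Number-style field: exact match
            return False
    return True

def narrow(chunks: list[dict], conditions: dict) -> list[dict]:
    """Keep only the chunks that pass the filter conditions."""
    return [c for c in chunks if matches(c["metadata"], conditions)]

# Made-up sample data for illustration.
chunks = [
    {"id": 1, "metadata": {"Department": "HR", "Year": 2024}},
    {"id": 2, "metadata": {"Department": "Finance", "Year": 2024}},
    {"id": 3, "metadata": {"Department": "HR", "Year": 2023}},
]
print([c["id"] for c in narrow(chunks, {"Department": "HR", "Year": 2024})])
```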

Defining the Filter Schema

Click “Add Filter” in Knowledge Base settings to define filter fields.

| Setting | Description | Example |

|---|---|---|

| Name | Filter field name | “Department”, “Year” |

| Type | Data type | Enum, Collection, Number, Date |

| Options | List of allowed values (Enum/Collection types) | “Finance, HR, Engineering” |

| Description | Description so the AI understands the filter | “Filter by the document’s department” |

| Required | Marks files missing this field with a warning | Required check |

Filter Types

| Type | Description | Slot Limit |

|---|---|---|

| Enum | Single selection from predefined options | Max 4 |

| Collection | Multiple selections from predefined options | Max 4 |

| Number | Integer filter | Max 2 |

| Date | Date range filter | Max 2 |

| Document Type (doc_type) | Document type auto-classified from chunk content (policy/guide/report/form, etc.) | Auto |
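Reading the Slot Limit column as the maximum number of filters of each type per Knowledge Base (an interpretation, not something the table states outright), a schema check could be sketched like this. The name `slot_violations` is hypothetical.

```python
from collections import Counter

# Limits from the table above; doc_type is automatic and not counted here.
SLOT_LIMITS = {"enum": 4, "collection": 4, "number": 2, "date": 2}

def slot_violations(filter_types: list[str]) -> dict[str, int]:
    """Return {type: count} for every type used more often than its limit."""
    counts = Counter(t.lower() for t in filter_types)
    return {t: n for t, n in counts.items() if t in SLOT_LIMITS and n > SLOT_LIMITS[t]}
```

For example, a schema with four Enum filters and three Date filters would report only the Date type as over its limit.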

Per-File Metadata Settings

After defining the filter schema, set metadata values per file. The metadata state is shown by color in the file list.

| Color | Meaning |

|---|---|

| Green | All filter fields have values |

| Yellow | Only some fields are set |

| Orange | A required field is empty |

| Gray border | No metadata set |

| Purple spinner | AI extraction in progress |

When metadata changes, the vector index is updated automatically. Existing vectors are kept — no re-embedding required.
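The color table above can be read as a small decision function. The sketch below is one possible reading. The precedence between states (for example, whether a file with no metadata at all shows gray or orange when a field is required) is an assumption, and `metadata_color` is a hypothetical name.

```python
def metadata_color(values: dict, fields: list[str], required: set[str],
                   extracting: bool = False) -> str:
    """Map a file's metadata state to the color shown in the file list."""
    if extracting:
        return "purple"                       # AI extraction in progress
    filled = {f for f in fields if values.get(f) not in (None, "", [])}
    if not filled:
        return "gray"                         # no metadata set (assumed to win over orange)
    if required - filled:
        return "orange"                       # a required field is empty
    if len(filled) < len(fields):
        return "yellow"                       # only some fields are set
    return "green"                            # all filter fields have values
```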

AI Auto-Extraction

When you set an extraction prompt on the filter schema, the LLM analyzes document content and filename at upload time and auto-extracts metadata.

Write the extraction prompt

Write an extraction prompt for each filter.

Example: “Extract the country name from the filename”

| Method | Description |

|---|---|

| Auto-extraction | Runs automatically at upload (when AI mode is active) |

| Single extraction | Click the extract button on a file’s metadata edit screen |

| Bulk extraction | Re-extract metadata for all files at once |

Filter Use During Search

When a connected KB has dynamic filters, the AI auto-infers filter conditions from the user’s question to narrow search scope.

Tool Description

The tool description is an AI-only description that tells the agent when and in what situations to use this Knowledge Base.

Example of a good tool description

Knowledge Base Management

Document Management

| Action | Method |

|---|---|

| Add document | Add Content (+) button or drag-and-drop |

| Delete document | Select a document and click delete |

| View content | Click a document to preview the extracted text |

| Search | Search by filename (server-side, fast even on large KBs) |

| Sort | Newest (default) / Oldest / By name |

| Badge | Meaning |

|---|---|

| Failed (red) | Processing failed — click Retry to retry |

| Processing (orange) | Processing in progress |

| Summary (toggle) | Processed + summary available — click to view inline summary |

Re-uploading a file with the same name shows a duplicate confirmation dialog.

| Option | Behavior |

|---|---|

| Overwrite | Delete the existing file and replace |

| Skip | Skip the duplicate, keep the existing file |

| Cancel | Cancel upload |

Reindexing

To rebuild the vector index for the entire KB, run reindex from Admin Settings > Documents. This is admin-only and processes all KBs at once. When you edit and save an individual file’s content, only that file is automatically re-processed.

Using in Chat

- @ Command: Reference the Knowledge Base directly during chat with @kb-name.

- Agent Connection

Document Processing Profile Selection

New feature — Apply different document processing strategies (extraction engine, chunking method, table preservation) per Knowledge Base.

Profile Use Cases

| Knowledge Base | Recommended Profile | Reason |

|---|---|---|

| HR policy (text PDF) | Default Extraction | Plain text, no extra cost |

| Financial report (many tables) | Table Preservation enabled | Improves table data search accuracy |

| Scanned documents (image PDF) | LLM Vision | Accurately extracts text inside images |

| Technical docs (long reports) | Semantic Chunking + Context Preservation | Topic-based separation + preserved opening context |

Advanced Settings

Adjust document processing and search parameters in admin settings.

These settings are global defaults for KBs without a Document Processing Profile. KBs with a profile use the profile settings instead.

Document Processing Options

| Setting | Description | Default |

|---|---|---|

| Chunk Size | Document split unit (characters) | 1000 |

| Chunk Overlap | Overlapping characters between chunks | 100 |

| OCR Enabled | Extract text from images | Enabled |
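To show how Chunk Size and Chunk Overlap interact, here is a minimal sketch of fixed-size chunking using the defaults from the table. It is a generic sliding-window splitter written for illustration, with the hypothetical name `chunk_text`. The product's own splitter may handle word or sentence boundaries differently.

```python
def chunk_text(text: str, chunk_size: int = 1000, overlap: int = 100) -> list[str]:
    """Split text into chunks of chunk_size characters that share `overlap` characters."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    if not text:
        return []
    step = chunk_size - overlap  # each new chunk starts this many characters later
    # Stop once the remaining tail is already covered by the previous chunk.
    return [text[i:i + chunk_size] for i in range(0, max(len(text) - overlap, 1), step)]
```

With the defaults, a 2,500-character document produces three chunks starting at offsets 0, 900, and 1,800, so each neighboring pair shares 100 characters.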

Content Extraction Engine

Choose the engine for extracting text from documents in admin settings.

| Engine | Strengths | Best For |

|---|---|---|

| Default (PyPDF/Langchain) | No setup needed | Plain-text PDF, DOCX |

| Tika | Server required, supports many formats | Mixed file formats |

| Docling | Server required | Complex layout documents |

| Azure Document Intelligence | Azure subscription required, high-precision OCR | Scanned documents, table-heavy PDFs |

| Google Document AI | GCP subscription required | Documents with embedded images |

| Mistral OCR | Mistral API required | PDF OCR |

| LLM Vision | Vision LLM-based, high precision | Complex layouts, charts |

Embedding Engine

| Engine | Strengths |

|---|---|

| Local (SentenceTransformer) | No external transmission, strong security |

| OpenAI | High quality, API costs |

| Azure OpenAI | Optimized for enterprise |

| Ollama | Local server, custom models |

Search Settings

Search settings have two layers: global (admin) and per-KB.

| Setting | Default | Description |

|---|---|---|

| Top K | 10 | Chunks to retrieve via vector search |

| Reranker Top K | 3 | Final chunks after reranking |

| Reranker Threshold | 0.1 | Minimum reranker score (lower = more pass through) |
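The three settings can be read as a two-stage selection. The sketch below assumes each candidate already carries both a vector score and a reranker score (in practice the reranker would score only the retrieved chunks) and that the threshold comparison is inclusive. `select_chunks` is a hypothetical name.

```python
def select_chunks(candidates: list[tuple[str, float, float]],
                  top_k: int = 10,
                  reranker_top_k: int = 3,
                  reranker_threshold: float = 0.1) -> list[str]:
    """candidates: (chunk_id, vector_score, reranker_score) tuples."""
    # Stage 1: keep the top_k candidates by vector similarity.
    retrieved = sorted(candidates, key=lambda c: c[1], reverse=True)[:top_k]
    # Stage 2: drop chunks below the reranker threshold, then keep the best few.
    passed = [c for c in retrieved if c[2] >= reranker_threshold]
    reranked = sorted(passed, key=lambda c: c[2], reverse=True)[:reranker_top_k]
    return [c[0] for c in reranked]
```

Lowering the threshold lets more chunks through stage 2, while Reranker Top K still caps how many reach the LLM.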

Per-KB Document Summary Settings

Control document summary generation per KB. Configure under the “File Summary” section in the search settings modal.

| Setting | Description | Default |

|---|---|---|

| Enable File Summary | Auto-generate AI summary on file processing completion | On |

| Summary Model | LLM model for summarization | Uses Task Model |

Question Generation (Multi-Vector Search)

When question generation is enabled, the LLM pre-generates “questions a user might ask to find this content” for each chunk and stores them as separate vectors.

| State | Search Method | Effect |

|---|---|---|

| Disabled | Content vectors only | Standard search |

| Enabled | Weighted sum of content + question vectors | Improved accuracy with user-question-style phrasing |
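The "weighted sum of content + question vectors" can be sketched as below. The weight of 0.5 and the choice to take the best-matching generated question are assumptions made for illustration. The source does not state the actual weights or aggregation, and `chunk_score` is a hypothetical name.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two dense vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.hypot(*a) * math.hypot(*b)
    return dot / norm if norm else 0.0

def chunk_score(query: list[float], content_vec: list[float],
                question_vecs: list[list[float]],
                question_weight: float = 0.5) -> float:
    """Blend content similarity with the best generated-question similarity."""
    content_sim = cosine(query, content_vec)
    if not question_vecs:                 # question generation disabled
        return content_sim
    question_sim = max(cosine(query, q) for q in question_vecs)
    return (1 - question_weight) * content_sim + question_weight * question_sim
```

A chunk whose content is phrased very differently from the query can still score well if one of its generated questions matches the query closely.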

Best Practices

Document Preparation

- Clean format: Distinguish titles and subheadings clearly with consistent styling

- Keep current: Update documents regularly and remove old ones

- Right size: Split large documents by topic and group related content together

Knowledge Base Composition

- Separate by topic: Create separate KBs for “HR Policy”, “IT Guide”, “Product Manual”, etc.

- Granular access: Manage sensitive info separately and restrict access by department

- Write tool descriptions: Detailed tool descriptions help agents auto-pick the right KB

Use Cases

New Employee Onboarding


Build Knowledge Bases of HR policy, work manuals, and IT guides so new hires can adapt quickly by asking the AI.

- Knowledge Bases: “HR Policy”, “Work Manual”, “IT Usage Guide”

- Connect 3 KBs to the agent

- Differentiate KB purposes via tool descriptions

Customer Support Automation


Build Knowledge Bases of product manuals, FAQs, and technical docs to provide accurate answers to customer inquiries.

- Dynamic filters: filter by product name, version

- Agent: customer-support system prompt + Knowledge Base connection

- Use citation display to ensure trust

Per-Department Policy Management


Classify per-department documents with dynamic filters and search the right policies by department.

- Dynamic filters: department, year, document type

- AI auto-extraction for metadata

- Access control to protect sensitive documents

FAQ

Are there KB capacity limits?


By default, there’s no limit on file count or capacity. Admins can set limits via environment variables.

What happens if I re-upload the same file?


When a file with the same name is detected, a duplicate confirmation dialog appears with Overwrite, Skip, or Cancel options.

Is text inside PDF images recognized?


The default extraction engine supports OCR, but for scanned documents or image-heavy PDFs, Azure Document Intelligence or Google Document AI engines are more accurate. Check the extraction engine setting with your admin.

Does changing dynamic filter metadata require re-embedding?


No. Metadata changes only update the vector index’s filter fields; existing vectors stay intact, so the update completes quickly without re-embedding.

What happens to search settings when multiple KBs are connected to one agent?


When multiple KBs are connected, search settings merge as follows:

- Top K, Reranker Top K: Use the largest value across KBs

- Reranker Threshold: Use the lowest value across KBs (more results pass)
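The merge rule above can be written out as a short sketch. The dictionary keys and the function name `merge_search_settings` are hypothetical, and only the max/min logic comes from the list.

```python
def merge_search_settings(kb_settings: list[dict]) -> dict:
    """Combine per-KB search settings for an agent with several KBs connected."""
    return {
        "top_k": max(s["top_k"] for s in kb_settings),                            # largest wins
        "reranker_top_k": max(s["reranker_top_k"] for s in kb_settings),          # largest wins
        "reranker_threshold": min(s["reranker_threshold"] for s in kb_settings),  # lowest wins
    }

# Made-up settings for two connected KBs.
merged = merge_search_settings([
    {"top_k": 10, "reranker_top_k": 3, "reranker_threshold": 0.1},
    {"top_k": 20, "reranker_top_k": 5, "reranker_threshold": 0.3},
])
print(merged)
```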

Does changing the Document Processing Profile affect existing documents?


Existing documents’ vectors are not auto-reprocessed when the profile changes. To apply the new profile to existing documents, delete and re-upload them, or run a full reindex from admin settings.

What's the cost of LLM Vision or Context Preservation?


- LLM Vision: page count × ~2 LLM calls (extraction + boundary correction)

- Context Preservation: chunk count × 1 LLM call
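For a rough budget, the two rules above can be turned into a call-count estimate. The sketch treats "~2" as exactly 2 calls per page, so the result is approximate. `estimate_llm_calls` is a hypothetical helper, not a product API.

```python
def estimate_llm_calls(pages: int, chunks: int,
                       llm_vision: bool, context_preservation: bool) -> int:
    """Approximate number of LLM calls needed to process one document."""
    calls = 0
    if llm_vision:
        calls += pages * 2   # extraction + boundary correction, roughly 2 per page
    if context_preservation:
        calls += chunks      # one summary call per chunk
    return calls
```

For example, a 40-page document that splits into 120 chunks would need about 80 calls for LLM Vision plus 120 for Context Preservation, roughly 200 in total.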

Related Pages

Dynamic Filters Deep-Dive

Filter internals, Manual vs AI, the 5-step search flow

Knowledge Graph

Connect Knowledge Bases + glossaries + DBs into one graph

Agents

Connect a Knowledge Base to an agent

Glossary

Improve AI understanding by defining domain terms